Resources

Video

This video will illustrate how to use the DAQMaster utility from Autonics to set up the Ethernet/IP coupler ARIO-C-EI with a couple of digital input slices as well as a digital output slice.

This video will illustrate how to connect to the Beijer PLC XP325 through an Ethernet cable using the BCS tool, which is the Beijer development tool.

NITROSource PSA Nitrogen Generators use advanced nitrogen gas generation technology to deliver low cost, energy-efficient nitrogen gas on demand.

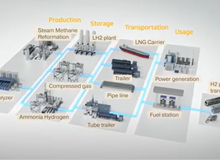

CC-Link IE TSN is the key to realizing real-time communication in manufacturing systems utilizing TCP/IP-compatible Ethernet‑based networks.

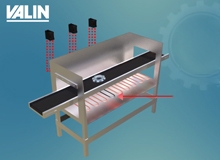

The samples to be analyzed are transported to the work station in a tray on a conveyor belt.

This video will illustrate how to set up an Autonics ARIO-C-EI Ethernet/IP Coupler to communicate to an Omron N Series PLC.

Revolutionize Battery Handling: Schunk's RCG-Series Magnetic Grippers

The entire spectrum of automation is utilized in the production of battery systems.

PATLITE introduces the WIO wireless system. This Bluetooth enabled system allows for remote indication and monitoring. Working as a pair, the transmitter and receiver combination allows an operator to trigger a light and notify personnel from up to 400 meters (with built in relay function).

The clogging of pump systems has been a problem for wastewater municipalities' Lift Pumping Stations for many years, and the Covid-19 pandemic brought an increase in the usage of wipes, along with an increase in the improper disposal of those wipes (oftentimes they are flushed down a toilet).

Using Mitsubishi’s GOT HMIs with their VFDs makes seeing the status and changing the settings much easier.

Configuring a Swivellink mounting arm for machine vision cameras, lights, sensors.

The welding node is an add-on feature in TMflow 1.88 designed for welding applications.

TM AI Cobot S Series, Smarter, Simpler, Safer and Super!

TM Robot can assemble three different types of encoder with PCBA. Triggered by a sensor, the PCBA is transferred from the previous station to our cobot.

When the workpiece moves to the appropriate position, TM Robot uses the built-in vision system for positioning to pick it up. Then our cobot separates the workpiece and places it in the tray for stacking.

TM AI Cobot - CNC Machine Tending Application ft. Valk Welding.

V2A039 TM AI Cobot - Cobot Machine Tending Solution ft. SINTA.

TM AI Cobot - Feeding System Application ft. Ars Automation

TM Palletizing Operator is an all-in-one solution exclusively designed for palletizing automation.

Automated Robotic Sanding ft. FerRobotics.

TM AI Cobot - Visual Inspection Product Traceability.

As Valin prepares for the holidays, we’d like to take some time to reflect on this past year and just how thankful we are for the relationships we share and how hopeful we feel for the future.

The Spectrex SharpEye 40/40 series is an exciting range of flame detectors that incorporates the very latest features and approvals for today's industries!

The global wine market has never been more competitive and customer expectations have never been higher. Facing a world of choices, buyers turn to familiar brands for consistent quality, taste, and price.

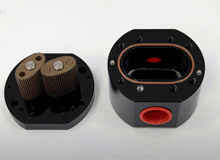

TopWorx™ DX-Series Controllers are certified for use in every world area. D-Series discrete valve controllers can survive in virtually any plant condition.

The e-RT3 Plus is a platform with durability, versatility, and practicality that is ideal for IoT and AI adoption at manufacturing sites.

The future is here: introducing the AI Cobot Techman Robot. Native AI engine with robot arm and vision.

This video will illustrate how to set up EtherNet/IP communication between an IAI RCON Ethernet/IP Gateway and an Omron N series PLC using the IA-OS utility.

This video will illustrate how to set up EtherNet/IP communication between an IAI RCON Ethernet/IP Gateway and an Omron N series PLC using the IAI Parameter Configuration Tool.

Check out Norgren's latest Transforming Tooling project: this customer's tool has 16 arms, and is able to move to multiple configurations!

In a changing world that is experiencing rapidly evolving demands for fulfillment customizations, Norgren is finding that their customers struggle to automate their systems to handle a high mix of products in palletizing and packaging operations.

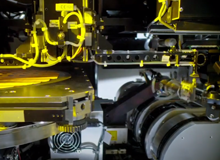

With mega fab construction accelerating around the globe, designers of new generation wafer processing equipment are following the lead of SEMI S2 and the European Machinery Directive by embracing functional safety standards like ISO-13849 for the design and integration of safety-related parts of control systems (SRP/CS) including the design of software.

Techman Robot is a leading brand of collaborative robots. We have the built-in vision and a native AI engine for robots to see.

This video illustrates how to set up the Baumer Vision System Smart camera using a VeriSens application suite.

Are you having trouble with your pneumatic valve banks on CVD, PVD, ETCH, and other 20+ year-old tools? Troubles such as EV failures and CDA leaks, obsolescence, and low airflow due to smaller Cv's? Are you tired of the midnight phone calls because your electric valves are failing? Well, Valin has the solution!

Introducing Cobot Intelligence's new standalone cabinet door sanding solution.

Macnaught manufactures highly accurate, robust industrial grade Flow Meters for clean process fluid applications. Macnaught is the largest global provider of Positive Displacement Oval Gear Flow Meters and has been since 1964.

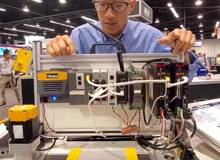

Valin and Mitsubishi Electric joined forces to bring the latest automation solutions to our customers in Arizona on August 7th. On board the mobile showroom customers saw the latest technology in PLCs, drives, CNCs, motion control, HMIs, energy monitoring and management, industrial robots, and connectivity for MES and ERP applications.

The versatile model A-1200, which can be programmed flexibly via IO-Link, is a pressure sensor without display. It is used in pressure monitoring and also as a PNP/NPN switch for process control. The sensor combines convenient handling with continuously reliable measurement results, with highest economic efficiency.

This video is a demonstration of how to set up an IAI RCON Ethernet/IP Gateway to communicate with a PLC.

The Kyntronics SMART Electro-Hydraulic Actuator (SHA) combines the best features of hydraulic power with the precision of servo control used in screw-type electro-mechanical actuators, without the inherent disadvantages of those approaches.

Introducing Parker's new FR Series Pressure Regulators. Parker's competitively priced FR Series ultra high purity regulators are perfect for controlling process gases in downstream point-of-use applications in semiconductor fabrication.

The ASCO Series RCS Redundant Control System is a pilot valve system that solves many of the challenges those working in the process industry experience.

A local public utility district has a freshwater collection tank that sometimes fills rapidly and other times slowly. At times the main pump could not keep up. Watch to see the solution.

Macnaught USA is pleased to announce our long awaited Explosion Proof / Flame Proof Oval Gear Flow Meter product line. Built on the legendary MX Series platform – accuracy remains at .5% of reading and .03% repeatability.

This video will illustrate how the Omron PLC works with a Beijer HMI model X2 base 7, which is the 7-inch screen. The page shown is being simulated in the IX Developer.

This video illustrates how to set up the Beijer HMI X2Base7 to communicate with an Omron C series PLC such as the CP1L, CJ, CS1, and many other older C series PLCs.

MT Series Turbine Flow Meters provide proven flow reliability and high accuracy for clean process fluids. All MT Series Turbine Flow Meters are manufactured in the USA and are individually calibrated with a tagged NIST Traceable K-Factor attached to each meter. Cost Effective Industrial Flow Measurement.

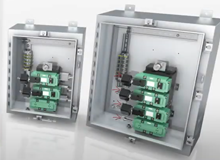

SolaHD™ power quality solutions equipment by Emerson is a comprehensive line of products that stretches from entrances to load points, to communications networks throughout facilities, making our total power quality solutions indispensable.

Enabling Engineering Breakthroughs that Lead to a Better Tomorrow.

This animation includes both Flowrox™ pinch valves and knife gate valves.

Flowrox™ solutions entail more than 40 years of experience in flow and process control and elastomer technology.

In addition to providing solid inductive sensing, our newest inductive sensors deliver the condition monitoring and advanced IO-Link features of Balluff’s Smart Automation and Monitoring System (SAMS).

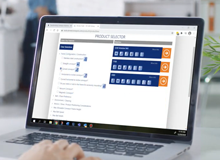

Welcome to Acromag's Signal Conditioning Selection Guide Video. As a business with many helpful products to offer, we know it can be difficult to find which one you need.

Acromag Signal Conditioning Selection Guide Video.

Welcome to Acromag's Signal Conditioning Selection Guide Video.

For decades engineers all over the world have had to rely on either cone and thread or welded tube fittings when working with high pressures or applications where permanent connections are required.

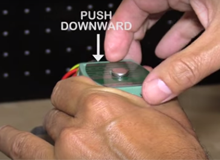

Introduction to Parker's installation tool for Phastite Permanent Ferrule Less Tube Fittings.

Parker's Phastite® Permanent Ferrule Less Tube Fitting is a breakthrough in tube connection systems; its innovative design concept eliminates the costly requirement of welding and combines quick installation with a single assembly process achieving a tube connector that can be used in applications up to 21,400 psi/1,470 bar.

The Parker R-max™ is a multi-functional system capable of integrating both stream switching and filtering into one unique compact assembly.

Innovations in the design of primary isolation valves and manifolds for mounting pressure instrumentation can deliver enormous advantages to both instrument and piping engineers, ranging from significantly enhanced measurement accuracy, to simpler installation and reduced maintenance.

Not all hardening processes are equal. Parker's Suparcase™ proprietary ferrule hardening process gives the differential hardness for superior grip and performance in various applications. Watch to discover Parker's invisible advantage.

Clara Moyano, Innovation Engineer - Material Science at Parker Hannifin’s Instrumentation Products Division Europe, describes & explains NORSOK, the standard developed by the Norwegian Petroleum Industry. She talks about NORSOK M650 and M630, focussing on the qualification of manufacturers of special material and material data sheets for piping.

Pitting resistance equivalent number (PREN) is a method that is widely used as a means of comparing the relative corrosion resistance of different steels. PREN is a theoretical way of comparing the pitting corrosion resistance of stainless steels based only on their chemical compositions.
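As a rough illustration of a composition-only comparison, the sketch below uses the commonly quoted relation PREN = %Cr + 3.3 × %Mo + 16 × %N. The nitrogen factor varies between references, so it is left as a parameter, and the alloy compositions are illustrative round numbers assumed for this example, not data from the video or from any material certificate.

```python
def pren(cr_pct: float, mo_pct: float, n_pct: float, n_factor: float = 16.0) -> float:
    """Pitting resistance equivalent number from weight-percent composition.

    Uses the commonly quoted form PREN = %Cr + 3.3 * %Mo + n_factor * %N.
    The nitrogen factor (16 here) is an assumption; some references use other values.
    """
    return cr_pct + 3.3 * mo_pct + n_factor * n_pct


# Hypothetical, rounded compositions for illustration only.
example_alloys = {
    "316-type (assumed 17Cr, 2Mo, 0.05N)": (17.0, 2.0, 0.05),
    "6Mo-type (assumed 20Cr, 6Mo, 0.20N)": (20.0, 6.0, 0.20),
}

if __name__ == "__main__":
    for name, (cr, mo, n) in example_alloys.items():
        print(f"{name}: PREN = {pren(cr, mo, n):.1f}")
```

A higher number suggests better pitting resistance in this comparison, but because the figure depends only on chemistry, it says nothing about heat treatment, surface condition, or service environment.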

Medium to high pressure cone and thread connections.

Clara Moyano, Innovation Engineer - Material Science at Parker Hannifin’s Instrumentation Products Division Europe, describes & explains why heat, chemical & mechanical properties, info on production batch, and provenance, can be crucial for the quality and service life of steel.

Clara Moyano, Innovation Engineer - Material Science at Parker Hannifin’s Instrumentation Products Division Europe talks about material selection and what to take into consideration.

Clara Moyano describes and explains the different characteristics of 316 stainless steel and 6Mo, in particular their mechanical properties, corrosion resistance, welding properties and lifetime.

Parker's high-performance two-piece bi-directional instrumentation Hi-Pro ball valve offers superior performance and reliability in some of the world's harshest environments.

Connecticut based Precision Sensors, Inc. (PSI) has emerged as a best-in-class supplier for the semiconductor and aerospace industries.

This video describes how and why the Parker Bestobell Cryogenic Valves contribute to the circular economy for industrial manufacturing. Increasingly frequent extreme weather events worldwide highlight the critical need to build a more sustainable future.

Parker Veriflo's products are optimized for the semiconductor industry, designed for use in process control regarding gas & chemical delivery systems for equipment manufacturers and end users.

Within the Semiconductor Industry, Parker's PFA & PTFE Products are designed for use in Ultra High-Purity (UHP) and corrosive chemical handling systems, including semiconductor fabs.

Welcome to the Parker A-LOK twin ferrule fitting assembly video when using a preassembly tool.

Fully Integrated Tube Connections for Instrumentation Valves and Manifolds. How to reduce project costs and completion times through elimination of threaded connections within process instrumentation installations.

Achieving a leak-free connection in any environment is the Holy Grail for many piping and instrumentation engineers.

This training course is about safety and potential leakage that causes unnecessary downtime at our end-user customer sites.

How to: In Parker's latest video on Process instrumentation, they look at how to safely remove a plugged monoflange in a process to instrument application. This video clearly shows the safe removal of a plugged monoflange using an integrated Parker Monoball solution to enable the process pipeline to continue operation.

This video is a tutorial on how to assemble tube clamps for small bore instrumentation grade tubes.

Learn how to safely remove a pressure instrument for calibration or in-situ calibration using Parker's Pro-Bloc modular double block and bleed valve.

Instrumentation Product Manager, Dave Edwards, details how to select the correct instrumentation tubing combinations for use with Parker A-LOK or CPI tube fittings using tube tables found in Parker Fittings, Materials and Tubing Guide.

Rollon’s Telescopic slides are robust while maintaining precision. They can handle the abusive life of reusable packaging automation with the accuracy needed for robotic inter

This video is an example of the Rollon automated storage application.

This video is an example of the Rollon generator application.

This video is an example of the Rollon automated fresh food storage application.

This video is an example of the Rollon generator slideout airport FBO and GSE application.

This video is an example of the Rollon microscope rail application.

This video is an example of the Rollon operating table base application.

This video is an example of the Rollon test tube filling application.

This video is an example of the Rollon transfer application.

This video is an example of the Rollon waterjet cutting application.

This video is an example of the Rollon X-ray application.

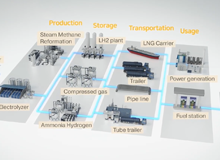

As the leading manufacturer in motion and control technologies, Parker offers a wide range of products suitable for use in the hydrogen transportation market. Parker A-LOK® two ferrule tube fittings are designed to achieve quality leak-free connections on board hydrogen-powered vehicles and are EC-79 Certified.

This video provides an overview of the new Parker Bestobell cryogenic thermal relief valve with integrated double ferrule A-LOK connections.

This video is about assembling the Hyferset for Two Ferrule compression fittings.

This video explains how you can ensure that your Hydrogen systems are selected with quality materials. As a source of clean energy, hydrogen significantly contributes to a more sustainable future. However, it can embrittle poor-quality steel materials, leading to unexpected component failures.

Parker's Hydrogen Solutions range includes products suited to very high pressures and low temperatures, KOLAS, KS ISO and EC79-compliant products and hydrogen service testing options.

With its EC79 Certificate(s) on a range of products, and as the leading manufacturer in motion and control technologies, Parker supports the energy transition and safe deployment of hydrogen as an environmentally friendly alternative fuel source.

With the Industrial Internet of Things and digitization, opportunities to improve business performance are almost endless.

Emerson's Jan Grasedieck explains in this video how manufacturers can use IIoT solutions and analytics software to gain actionable insights to improve productivity and Overall Equipment Effectiveness.

In this video, IIoT experts explain the types of actionable insights Emerson can give you during one of their Digital Transformation workshops, providing guidance and potential outcomes to benefit your business.

In this video, Emerson's expert from their Fluid Control & Pneumatics Mobile Event explains how IIoT solutions enable customers to do predictive maintenance and improve throughput and energy efficiency.

In this Bringing the Bits Together video, Emerson's Amit Patel and Jason Maderic discuss how the TopWorx predictive valve maintenance solutions help customers navigate the world of Digital Transformation to improve areas around production, energy, emissions, reliability, and safety.

World's smallest class inverter with high functionality. Compact inverter with open network and functional safety functions.

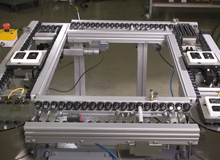

Dorner's precision edge roller pallet and tray handling conveyors provide efficient, non-contact zoning for small & light-load assembly automation applications. They feature a clean, open roller design and are ISO Class 4 approved for cleanrooms.

Dorner 2200 Series Precision Move Pallet Systems are modular, dual belt pallet systems for automation assembly.

Dorner FlexMove conveyors are available as completed conveyor systems for fast and simple installation, or as parts for flexibility in design.

Dorner Flexmove Conveyors are ideal for part handling, tight spaces, buffering, accumulation and elevation changes. They're perfect for processing, packaging, assembly automation, medical, life sciences and more!

Dorner Lift gate conveyors are a popular solution for creating safe and fast walk-through access to maximize usable space. Take a look at this video to see some of Dorner's lift gate conveyors in action.

Dorner Manufacturing presents DTools, the industry's leading tool for complete conveyor design.

Dorner 2200 Series low profile, high performance fabric and modular belt conveyors feature a high speed nose bar transfer option, a durable single piece frame design, universal T-slots, and a wide range of belting and guiding options.

Dorner's precision edge roller pallet and tray handling conveyors provide efficient, non-contact zoning for medium & heavy load assembly automation applications.

Along with monitoring points for pressure, level, and flow, a chemical manufacturer has up to 500 temperature measurement points, both thermocouple and RTD, scattered throughout their process.

Hydrogen is becoming an increasingly attractive option for many businesses looking to reduce emissions. Parker's H2 range includes products suited to very high pressures and low temperatures, as well as KOLAS, KS ISO and EC79-compliant products and hydrogen service testing options.

Polymer Absorption Sensor (PAS) technology is at the heart of Naftosense products (specifically in Naftosense products, Elastomer Absorption Sensors). PAS technology, developed in the 1950s, has a track record of detecting hydrocarbons in extreme conditions and enables real-time, cost-effective critical infrastructure integrity monitoring.

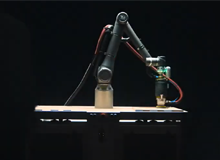

This 7-axis articulated robot demonstration exclusively utilizes the AZ Series family of motors and actuators and is driven by Oriental Motor's new AZ Mini driver.

Support Arm Systems can adapt machines to different angles and accommodate height variations of an operator, ensuring efficient and productive performance. All system components for rotating, tilting, swiveling, raising and lowering are combined to provide flexibility.

This video will illustrate how to set up an IAI RCM EtherNet/IP gateway with an Omron Machine Automation Controller N series PLC.

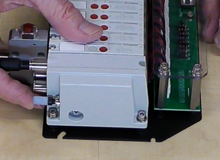

Unboxing video of the Endura SVD (Slit Valve Door) Pneumatic Manifold Replacement AC-150-2321

An affordable SCARA robot IXP series with stepper motors. Utilizes technology from the PowerCON 150 (PCON-CA) controller for high performance at lower cost.

A 1-minute video shows the main functions of the TTK digital leak detection system on a data center site.

Introducing the modular automation line of products from Oriental Motor. Modular automation is a rapidly expanding form of automation where traditional assembly lines are transformed into modular assembly lines using automation products designed for DC power or battery input.

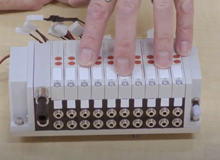

The 221 Series from Wago – Flexible, Fast and Safe.

This video will illustrate how to set up camming with IAI EtherCAT Motion with an Omron Machine Automation Controller N series PLC.

This video shows how to set up pneumatics EtherNet/IP G3 with an Omron Machine Automation Controller N series PLC.

Dates: April 12-14, 2022

Venue: Anaheim Convention Center – Anaheim, CA

Visit Us at Booth# 4461

The Valin Dual Ported Exhaust kit works with any manifold that includes the AVENTICS™ AV03 valves.

This video will illustrate how to set up Aventics 580 Ethernet IP DLR with Omron Machine Automation Controller N series PLC.

Check out the new and improved Balluff Demo Van. Complete with an array of products featuring safety, IO-Link, RFID, metalworking, level sensing, and more. Keep an eye out in your area for the demo van as it travels across the US.

This short video is going to show how to change the IP address of the network card on your PC.

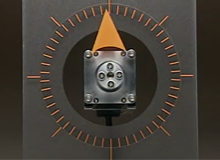

What a servo system is, why it is needed, and the variations of its configurations.

This video highlights the advantages of the IX Series of metering pumps. The IX Series meets today’s demand for automated chemical delivery in industries from water treatment to chemical processes.

We work through a basic actuator sizing example with basic requirements to show what the process is like.

When a stepper motor makes a move from one step to the next, the rotor doesn't immediately stop. The rotor actually passes the final position, is drawn back, passes the final position in the opposite direction and continues to move back and forth until it finally comes to a rest. We call this "ringing" and it occurs every single step the motor takes.

The AlphaStep AZ Series stepper motors offer high efficiency and low vibration, and incorporate our newly developed Mechanical Absolute Encoder for absolute-type positioning without battery back-up or external sensors to buy.

This video will illustrate how to set up an IAI RCON EtherCAT Motion with an Omron Machine Automation Controller N series PLC.

Flat type stepper motors are light weight and reduce footprint for applications where space is limited, such as an index table. Both round-shaft and geared versions are available.

Equipped with Oriental Motor's compact reflective encoder technology, these stepper motors can provide feedback for motor position, speed, and direction for high accuracy closed loop operation. See how they can help in a diverting conveyor application.

The electromagnetic brake is located in the back and operates separately from the motor.

High resolution type stepper motors double the full step resolution of standard motors and provide smoother rotation as well as better stop accuracy. See how they can help in a dispensing and an imaging application.

It's time to celebrate the season, the relationships we share, the future and all of its possibilities.

The AC-150-2473 Endura TxZ Remote Gas Box pneumatic manifold is a 10-station manifold with 2x3/2 pneumatic valves (20 valves total). It comes as a complete kit for ease of installation.

Out with the old, in with the new. Intuition: Introducing the next generation of water treatment controllers.

IXA High Speed SCARA with DDA Small Parts Pick and Place

Avoiding unnecessary downtime is critical and part of keeping operations running as efficiently as possible is having a good handle on the inventory required. From valves and gauges to sensors and filters, there is a multitude of different parts and pieces of equipment associated with an industrial environment.

These are the four most important questions to ask when selecting either a servo or stepper motor for your application: speed, torque, inertia and power.

Dirk Beveridge from UnLeashWD is traveling across the country to discover the stories of leaders and frontline employees in the distribution businesses that define the innovative spirit and purpose driven cultures that supply America.

When sizing a motor, what is the load-to-motor inertia ratio? And why does it matter? What is an acceptable value?

What is the difference between a direct drive and worm-gear driven rotary stage? Which is better?

Understanding the blend of electric and hydraulic technologies.

This video will illustrate how to set up an RCON EtherCAT Gateway with an Omron Machine Automation Controller N series PLC.

How a hydraulic actuator is similar, yet different, from pneumatic actuators.

How a pneumatic actuator moves, creates force, various types and constructions, what is required in a system, and the pros and cons.

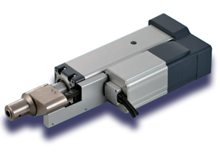

The basics of what an electric or electromechanical actuator is and the various technologies that this encompasses.

This video is going to show how to connect an Aventics AES Ethernet IP bus coupler with an Omron CJ PLC with Ethernet IP.

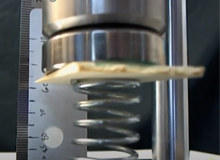

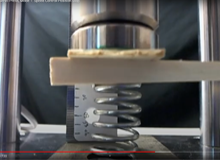

This short video is going to show how to use Intelligent Actuator’s “speed control-holding incremental load” motion mode on the IAI servo press product.

Linear motor actuators are great…but we hesitate before using them in vertical applications. We discuss why and what some solutions are.

Explosive materials and flammable gases can be a danger, especially if the equipment could ignite them. So there are certain things we want to take a look at and understand for the actuators.

Multi-axis applications, applications where two or more actuators are connected together, do not necessarily need customized actuators. But, we do a lot of engineering on how they go together. We discuss factors and lessons to learn.

Wet environments may include caustic chemicals. Sometimes all we have to do is protect the actuators. Other times we have to modify the design a little bit or the materials used. We take a look at the factors we’ll ask about.

Cleanroom applications have particular requirements for keeping the environment that they are in super clean in order to keep the products from being contaminated. We discuss the various factors to consider in selecting the right actuator for the application.

Extreme environments often require custom actuators and motors. We discuss typical considerations for designing actuators and motors for a vacuum environment.

This video shows an unboxing of the Centura Ultima Chamber Pneumatic Manifold.

DCC makes it easy to monitor and compare data collected from all devices. Start improving productivity with Secomea cloud solution!

This video is going to show a short practical application of using two different modes with the IAI servo press product. One is “speed control-holding load” (stopping at load) and the other is “speed control-stopping at distance” (or “keeping distance”).

Looking for an electric actuator with a specific stroke length? We discuss the feasibility of producing custom stroke lengths using various technologies.

This video is going to show how to use the IAI Servo Press in the “Speed Control - Holding Load” mode.

This video is going to show how to use Intelligent Actuator’s “Servo Press” product in the press motion mode of “Speed Control-Keeping Distance”.

Have you found an actuator that you like, but it just does not quite have everything that you need? Maybe you wish you could tweak it just a little bit?

This video is going to show the details of using the servo press in the first of the position press modes which is “Speed Control-Keeping Position”.

This video is going to explain the five program steps in the IAI Servo Press programming cycle.

This video is an overview of the Robo Cylinder® software that is used with the Intelligent Actuator Servo Press product.

Are you struggling to get the performance from your servo motor that you are expecting? We discuss how the servo drive configuration can adversely affect your servo motor performance.

Should you use 5 or 24-volt logic with your devices and controllers?

For over 20 years, Parker Hannifin (and the former company domnick hunter) have been the trusted partner for bottling plants across the globe. They have delivered a robust and reliable CO2 quality incident protection system which has provided peace of mind for our customers.

There are two main applications when it comes to tank heating: maintaining temperature or increasing temperature. Temperature maintenance is typically accomplished via external means like heat trace or with insulating blankets wrapped around the tank.

This video is going to go over how to use the simulation feature in the G9SP configuration software.

Introducing Echoline by UE Precision Sensors for monitoring environment health and safety while improving wafer integrity and equipment safety.

Find out the difference and why you’d use differential signals.

A useful topic to understand is the difference between “sinking” and “sourcing”. You can use this for inputs and outputs and to make sure that your feedback is compatible with your electronics.

Learn about different types, protocols, form factors and variations of feedback.

This video will teach you how to install the NF3 Interlock Kit to a Centura Gas Box Pneumatic Manifold.

The Internet is already awash with just a ton of information about feedback, feedback technology, and new products. So, I'm just going to provide you here with some basic terminology and technologies that most everyone in the industry refers to.

We take a broader, more philosophical, look at what feedback is, the types, and where to put it.

Valin has engineered a drop-in pneumatic manifold replacement that is a tailored solution for your aging Centura 0190-09487 & 0190-02046 manifolds. The AC-150-2444 pneumatic manifold comes as a complete kit for ease of installation.

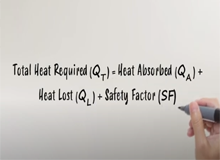

Do you know how to determine the amount of energy required to heat a substance to a specific temperature? If the answer is “no”, stick around. We’ve got a simple method to help you determine the energy required for almost any heating application!
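The video's own method is not reproduced here. As a minimal sketch of one standard approach, the sensible-heat relation Q = m × c × ΔT gives the energy needed to raise a mass m with specific heat c by a temperature difference ΔT. The example below assumes no heat losses and no phase change, and the water specific heat of about 4186 J/(kg·K) is an approximate textbook value; the function and parameter names are hypothetical.

```python
def heating_energy_joules(mass_kg: float, specific_heat_j_per_kg_k: float,
                          start_temp_c: float, target_temp_c: float) -> float:
    """Sensible heat Q = m * c * dT, ignoring losses and phase change (assumption)."""
    delta_t = target_temp_c - start_temp_c
    return mass_kg * specific_heat_j_per_kg_k * delta_t


def joules_to_kwh(energy_j: float) -> float:
    """Convert joules to kilowatt-hours (1 kWh = 3.6e6 J)."""
    return energy_j / 3.6e6


def required_power_kw(energy_j: float, heat_up_time_h: float) -> float:
    """Average heater power needed to deliver energy_j within heat_up_time_h hours."""
    return joules_to_kwh(energy_j) / heat_up_time_h


if __name__ == "__main__":
    WATER_SPECIFIC_HEAT = 4186.0  # J/(kg*K), approximate value for liquid water
    q = heating_energy_joules(100.0, WATER_SPECIFIC_HEAT, 20.0, 60.0)
    print(f"Energy: {q:.0f} J = {joules_to_kwh(q):.2f} kWh")
    print(f"Average power for a 2 h heat-up: {required_power_kw(q, 2.0):.2f} kW")
```

In practice a heater is sized above this figure to cover losses through tank walls and the liquid surface, which this sketch deliberately leaves out.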

We discuss five questions that typically should arise when trying to select a drive for a motor.

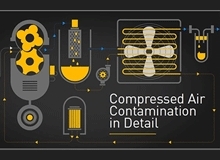

There are 10 major types of compressed air contaminants. This video will look at these contaminants in detail to understand the problems they can cause within a compressed air system.

Valin would like to wish you peace and joy this holiday season!

We address the most common questions asked by customers who are not getting the performance they expect from their servo motors.

The next step to understanding and solving performance problems that you may be having, like not getting enough torque or speed or burning up motors, is to understand the various thermal protection models that your drive and motor may have.

To size and select a servo motor, understanding the speed/torque curves is crucial. We discuss the basics as well as address typical questions that arise.

We dive into the finer details of how speed/torque curves are created.

The MX Series pumps represent the latest state of the art design in non-metallic magnetic drive pumps.

Linear motors are a linear version of rotary motors… but typically are the same technology as brushless servo motors. We look at the basic structure of a few different designs.

Valin has engineered a drop-in pneumatic manifold replacement that is a perfect solution for your aging fleet of Endura SVD Slit Valve 0010-20052 & 0010-70297 tools. The AC-150-2321 pneumatic manifold comes as a complete kit for ease of installation.

Piezo motors are an entirely different type of electric motor. We look at the basic structure and how they operate.

Stepper motors are a specific type of synchronous electric motor. We look at their basic structure and what makes a stepper motor a stepper motor.

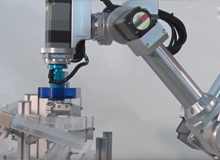

Machine tending is the automated operation of industrial machine tools in a manufacturing plant using robotic automation systems.

Brushless servo motors are a specific type of synchronous electric motor. We look at a few different designs and compare them.

This video discusses why you would use a safety PLC instead of safety monitoring relays for your machine safeguarding.

In this video we're going to go into the more sophisticated topics of feedback and the different types of motors and how to control them.

John Brokaw helps us learn the difference between these types of motors plus how AC Induction and servo motors fit in.

Heat tape? Heat cable? Heat trace? What’s the difference?

In today’s video, I’ll be explaining what heat tape is and how it’s different from heat cable and heat trace products.

We address the basics of what an electric motor is.

This short video will help you do your first pick and place application with the TM Series robot.

This video adds to the program created in the video for manual pick and place to make it so that the robot can find the part and pick it up using the integrated vision system.

This video will show how to go through the calibration steps for the Omron TM Robot equipped with integrated vision system. Performing the calibration creates a workspace that is used in creating the vision work flow.

This video has a collection of tips for using the robot and the TMFlow programming software.

This video shows how you can program a TM Series Robot to do waypoints.

HMI-to-controller communication may be one of the biggest surprises to overcome in your project. Here are some factors to consider.

Which is better: Selecting motors and mechanics that are the best of breed from different manufacturers OR sticking with one supplier? Here are the factors to consider.

One may think this is simple, but there are a lot of pitfalls in the compatibility between drives and controllers from different manufacturers. Here are the factors to consider.

Which is better: Selecting products that are the best of breed from different suppliers OR sticking with one supplier? You don’t want to find yourself pushing a train under water with a VW Bug with square wheels!

Which is better: Selecting drives and motors that are the best of breed from different manufacturers OR sticking with one supplier? Here are the factors to consider.

This video illustrates how to configure an IAI MCON EtherCAT motion controller with an Omron N-series Machine Automation Controller and IAI's RCP6 battery-less absolute encoder actuators.

This video illustrates how to achieve circular interpolation using an Omron N-series Machine Automation Controller and IAI MCON EtherCAT motion controller with RCP6 battery-less absolute encoder actuators.

This video illustrates actuators performing circular interpolation using IAI RCP6 actuators, MCON EtherCAT Motion Controller, and Omron Machine Automation Controller N-series PLC.

For my 30th episode, I thought I'd celebrate by sharing a little bit of the goofs and fun and laughter and jokes and frustration that we've had along the way in making the first 29 episodes.

Robots are easy to specify, but they lock you into their design. Modular mechanics take a little more engineering but provide flexibility that you may need. Here are 10 questions to consider for this decision.

On the surface, converting fluid power (pneumatic and hydraulic) actuators to electric actuators is simple. And it can be. But, there are a few things that I've run into that tend to hold things up that you may want to be aware of right off the bat.

How well a linear actuator performs in its straightness and flatness is highly dependent upon its Abbe Error…that is the angular error caused by the rolling, pitching and yawing of the carriage as it moves. But, why does it change the performance…and by how much?
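To give a feel for "by how much", here is a small sketch using the usual geometric relation for Abbe error: the positional error at the point of interest is roughly the offset from the bearing or measurement axis multiplied by the tangent of the angular error. The function name and the example numbers (100 mm offset, 10 arc-seconds of pitch) are assumptions for illustration only.

```python
import math


def abbe_error_um(offset_mm, angle_arcsec):
    """Positional error in micrometers: offset * tan(angular error)."""
    angle_rad = math.radians(angle_arcsec / 3600.0)  # arc-seconds -> radians
    return offset_mm * math.tan(angle_rad) * 1000.0  # mm -> micrometers


# Assumed example: the load sits 100 mm above the carriage, which pitches 10 arc-sec.
error = abbe_error_um(100.0, 10.0)
print(f"Abbe error: {error:.2f} um")
```

Because the angles involved are tiny, the error scales almost linearly with the offset: doubling the distance between the carriage and the point of interest doubles the error.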

Fusing the Power of Hydraulics with the Precision of Servo Control: SMART Hydraulics Actuators (SHA) for the metal forming industry.

The PLx is a handheld device used by safety professionals to help validate the safety equipment on industrial machinery.

In this video, Nathan will be focusing on how to increase productivity, which is a key initiative for any company wanting to increase market share in today’s challenging economy. He will be focusing on the Watlow Aspyre power controller and its role in helping increase productivity.

After all this education and talking about sizing and selecting electric linear actuators, you probably are wondering what tools you have available for you to take a crack at it yourself.

Actril Cold Sterilant is a powerful, ready-to-use Peracetic Acid solution for surface disinfection that is effective against a broad spectrum of microorganisms and viruses.

Valin’s Bruce Ng continues the conversation on what happens to the lifespan of an actuator when important moment-loading information is omitted in Episode 25.

We've talked about loading and moment loading and what we need to know in order to size and select an actuator properly. Interestingly enough, what we do as Application Engineers is to help you fill in all the gaps, all the what-you-don’t-knows. So, I've asked one of our Senior Application Engineers, Kent Martins, to go through a sizing and show you what he does.

Recent events with the COVID-19 virus have placed a spotlight on disinfecting and cleaning our personal areas and our work areas more frequently with reliable solutions. Actril™ is a ready-to-use cold sterilant, a hydrogen peroxide based disinfectant solution.

One of the best ways to decrease your operating expenses is to reduce your scrap. In this video we're going to talk about process heating and controls and specifically Watlow's new power controller called the Aspyre.

Technology is changing so rapidly these days that we can be constantly feeling behind. The terms IIoT and Industry 4.0 are being thrown around way too easily. I've been looking for a one-size-fits-all solution for customers I talk to, but it just does not seem to exist. Even at the oil wells, which seems like the most cookie-cutter of all applications, there just is not a one-size-fits-all solution.

What happens if you use the incorrect terminology? What if you say “accuracy” but need “repeatability”? We interview Ray Marquiss in Episode 23 to find out.
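As a simple illustration of the distinction, the sketch below treats accuracy as the average error from the commanded position and repeatability as the spread of repeated moves to that same position. These are common working definitions, but manufacturers specify them in different ways, so the function name, the definitions, and the sample data here are all assumptions.

```python
import statistics


def accuracy_and_repeatability(target, measured):
    """Return (mean error, sample standard deviation) of repeated measurements."""
    errors = [m - target for m in measured]
    mean_error = statistics.mean(errors)  # how far off on average (accuracy)
    spread = statistics.stdev(errors)     # how tightly the results cluster (repeatability)
    return mean_error, spread


# Assumed data: five moves commanded to 100.00 mm, positions measured in mm.
positions = [100.48, 100.52, 100.50, 100.49, 100.51]
mean_error, spread = accuracy_and_repeatability(100.0, positions)
print(f"Mean error: {mean_error:.3f} mm, spread: {spread:.3f} mm")
```

In this made-up data the axis lands about half a millimeter off every time, yet the results cluster within a few hundredths of a millimeter: poor accuracy, good repeatability. An application that can calibrate out a fixed offset may only need the latter.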

Iwaki self-priming SMX series pumps are an ideal alternative to air operated double diaphragm (AODD) pumps when pumping chemicals.

The Special Gripper SCG is particularly suitable for handling thin, porous and sensitive workpieces. The high volume flow of the gripper ensures secure holding even with porous material or workpieces that do not cover the entire suction surface.

How do you mitigate your risks when sizing, selecting and designing mechanics? How do you decide whether to make or buy your mechanics? Here are some considerations.

You may be at the point of looking at adding feedback to your mechanics in order to make them more precise or to capture some of the errors I discussed last episode in Episode 20. But, you may also be wondering about some of the problems that can cause.

Concerned about COVID-19 Coronavirus? Cantel recently had a third-party laboratory verify the efficacy of its Actril™ and Minncare™ Cold Sterilant on hard, non-porous surfaces. The test demonstrated a complete inactivation of the 229E human coronavirus strain in 5 minutes at room temperature when used according to label instructions.

I have a question for you: Do you embrace linear encoders? Or do you avoid them whenever possible? Or are you wondering what a linear encoder even is? After all this sizing and selecting of mechanics, and even designing your own mechanics, you might be considering putting a feedback device on there and whether you should or not.

LOSTPED is an acronym that stands for Load, Orientation, Speed, Travel, Precision, Environment and Duty Cycle. But, what does it REALLY mean?

Frameless Kit Motors are the ideal solution for machine designs that require high performance in small spaces. Kit motors allow for direct integration with a mechanical transmission device, eliminating parts that add size and complexity. Use of Frameless Kit Motors results in a smaller, more reliable motor package.

Valin is at ATX West February 11-13, 2020. Anaheim Convention Center, Anaheim CA.

In this video you will see just a few of Valin’s custom engineered solutions. A complete list of solutions includes integrated automation, electrical subassemblies, motion control subassemblies, robotic solutions, custom gantry systems, custom server solutions, control panels, valve assemblies, nitrogen generators, filtration systems, and custom heating solutions.

Are you implementing automation at your company? Do you need technical education and expert advice to make major business decisions? Whether you're an engineer working in aerospace, a C-suite executive in manufacturing, or any other type of professional looking for solutions to streamline operations, the Automation Technology Expo (ATX) West will give you the education and connections needed to make the right choices for your projects or business.

Valin employees would like to wish you peace and joy this holiday season, and throughout the new year.

A common topic when putting together a motion control system and a gantry is whether to use end-of-travel limit switches, home sensors or absolute encoders. This really depends upon the application. So, let's talk about the pros and cons.

When we size, select, and design gantry systems such as this one, we must make sure that we take into account the whole framing that this is going to be mounted on.

You cannot just start on the mechanics, pick the motors, pick the drives, pick the controls, pick the HMI. First you must understand everything all together. Since we're talking about mechanics, there's a few things we need to kind of jump on right off the bat. To figure out the holistic approach, there's a lot more to it.

One of the things that can really trip people up with sizing and selecting a gantry system is whether they need one or two motors for the X and X-prime. In this video we will shed some light on this topic.

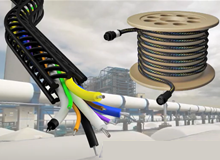

A very important factor to keep in mind when sizing your gantry system is the cable management and cable lengths you'll need. If you think about it, we're going to need cables to span throughout every axis of motion.

Back in Episode 6, I explained to you the different types of linear mechanics. This included ballscrews, belt & pulleys, linear motors and even rack & pinion. I get the question all the time: which linear mechanics do I use?

After taking a break from mechanics for a few episodes, we're returning to the idea of a gantry. But, you may wonder what a gantry looks like. For an explanation of that, we're going to turn to Valin’s own Michael Reynaud with a special animation by Valin’s Cassidy in order to show you what a gantry looks like.

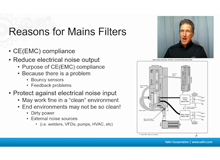

I am continuing my conversation with you about EMC installation. We talked about electrical noise, where it comes from and how we control it. We talked about the reasons for Mains filters. Now I'm going to talk a little bit about how to select Mains filters and unfortunately, it's a real black art.

Impedance heating systems act much like standard heating circuits, except the resistor is the pipe itself. This is accomplished when a low AC voltage is applied across the terminals. The resulting impedance of the system generates heat on the pipe. The control panel for an impedance system provides power and control. However, the step-down transformer is what provides the reduced voltage, 30 VAC or less, to the pipe. This rating stays within OSHA and NEC limits.

In this video, we'll show you how to set up and get motion from IAI's Elecylinder.

A new way of thinking about nitrogen. A new source of sustainability. Advanced on-site generator producing nitrogen gas from compressed air to produce all the nitrogen you need.

Continuing on talking about electrical noise, I often times have suggested mains filters to customers and they say, “Well I'm not shipping my system to Europe so I don't need it.” But there are very good reasons for putting a mains filter on your system even when you're not shipping it to Europe. Let's take a look at this.

Today we're taking a break from all the mechanical topics we've been talking about and let's talk about an electrical one: electrical noise.

This video tutorial shows you the steps on how to enable the Bluetooth feature on the Watlow EZ-Zone controller for use with the Watlow EZ-Link app.

This video tutorial shows you the steps on how to factory reset the Watlow EZ-Zone controller.

What is specmanship? Why do we care? Well, in case you haven’t noticed, the catalog specs are not always as straightforward as they appear. Let's look at a couple of examples.

The AlphaStep AZD-K Stepper Motor Drivers offer 24/48 VDC input voltage, high functionality and closed loop control.

Oriental Motor's AlphaStep closed loop control systems perform accurate positioning operations with ease.

We're continuing to talk about terminology and this time we're talking about rotary mechanics. I’m Corey Foster of Valin Corporation. Let's talk about this.

The InSight Glow option uses a retro-reflective, photo-luminescent dial design that illuminates the entire front of the instrument dial for an extended amount of time when exposed to a light source for as little as 10 seconds. The dial appears bright white in darkness, fog, smoke, and fire. InSight Glow meets the National Fire Protection Association's (NFPA) standards for bright-white visibility in a power outage.

Three specific types of errors have a negative impact on pressure sensor accuracy. This easy-to-understand video from WIKA explains the difference between zero-point errors, span errors, and non-linearity.

A diaphragm seal makes measuring pressure safer and more reliable – even in extreme working conditions – and is ideal for protecting pressure instrumentation and process transmitters. Discover the basic principle behind diaphragm seals and when to use them.

As vital components of chemical feed in water treatment, metering pumps satisfy a diverse range of applications. Iwaki’s mission is to provide solutions for any application, with safety, accuracy, and fast, flexible support for the constantly evolving marketplace.

This overview will help you to understand the differences between industrial automation manufacturers, representatives, distributors and integrators…and who you want to reach out to for help.

You may be wondering what the main components are that you need to make a motion control system. What are the minimum number of components you need?

Before we dive into the meat of the matter of sizing and selecting mechanics, there are some basic concepts we need to understand: namely the force, torque, moment, inertia, and axes of motion.

As we dive further into sizing and selecting mechanics, there are a couple of terms that are extremely important in understanding the requirements of an application.

We're continuing on with talking about some of the basic terminology and things you need to know in order to be able to size your own mechanics and gantry. We cover motion requirements and a summary at the end of the last several episodes.

We've been talking about a lot of different basic terminology. Now we're going to talk about just some basic types of linear mechanics.

As you may know, electric actuators provide a lot of benefits over pneumatic actuators. For example, you may be able to carry out different stroke lengths using software, or hardware such as a programmable logic controller (PLC). However, some electric actuators only come pre-programmed with two ‘stroke-length’ positions, intending to be cost effective without the software or hardware requirement.

Check out Parker's virtual reality demo showcasing a working manufacturing floor and a product showroom where you can interact with a full host of their products.

The new 2-Phase Bipolar Stepper Motor CVD Driver offers superior performance and value and is ideal for OEM or single axis machines.

Kent Introl has a long history of designing and manufacturing valves that will need to cope with the most arduous conditions. Here, the team conducts final checks on an anti-surge valve prior to despatch.

There is one distributor headquartered in the heart of Silicon Valley that is not waiting for tomorrow. Valin Corporation is creating its tomorrow today. "As a leader, I can't allow us to cling to yesterday. I have to keep us focused on tomorrow." - Joe Nettemeyer

It's that time of year! Valin purchased food, clothing, and toys to donate to CityTeam in San Jose.

Doug Crimmins shows us how to wire and calibrate a Valvcon ADC Actuator for use in modulating service with 4-20 mA feedback.

Parker Velcon salutes the Joint Inspection Group (JIG) and their members for their commitment to Aviation Fueling Safety!

On July 24th Valin hosted a career day in our San Jose office for 8-10 middle school students in support of Breakthrough Silicon Valley.

The Iwaki Pump Engineering Team, originators and holders of many pump patents, proudly introduces their newest design innovation. After years of thorough testing and successful operation, Iwaki Air has now completely re-designed the Air Motor to include the new Looped C Spring Spool.

Corey Foster demonstrates how Valin's products and services can tie into the concept of the Industrial Internet of Things.

Wzzard Mesh Gen 2 models have the ability to monitor temperature, humidity and vibration, and can accommodate virtually any industry-standard external sensor.

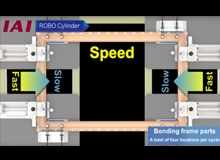

This system produces frames by bonding resin parts. A total of four locations are bonded. Adhesive is applied to the end faces of resin frame parts. Then, the end faces of the frame parts are pressed together to be joined.

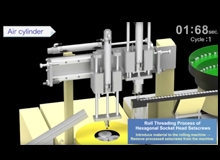

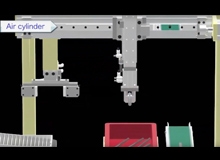

This system rolls hexagonal socket head setscrews (hereinafter referred to as "setscrews"). Air cylinders are used to introduce material to the rolling machine and remove processed setscrews from the machine.

A loader/unloader for a press machine that produces lids for cans. It loads the material in the material feeder to the press machine and unloads the pressed products to the product chute.

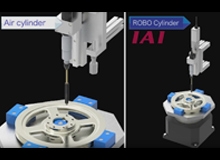

Reduced line operating hours by approx. 25% due to vibration-free stopping and high-speed operation. Improvements achieved by electric linear actuators.

Changing the speed during movement to reduce the cycle time by 20%. Cardboard boxes containing CDs travel on a conveyor. There are five different types of cardboard boxes, and each type contains different CDs. Boxes are sorted by type and transferred onto five product conveyors.

A simple system configuration to reduce the cycle time by 44%. The material, round bars, is checked for outer diameter before being processed by the rolling machine.

This system removes burrs around holes drilled in a pulley. Eight locations are deburred. The deburring brush attached to the motorized spindle is used to remove the burrs along the edges of the holes.

Introducing the F4T with INTUITION® from Watlow. Combining the flexibility of a modular I/O with best-in-class ease of use, the F4T could very well make user manuals a thing of the past.

Learn how to set up data logging in the Watlow F4T controller.

Learn how to configure trending and graphing in the Watlow F4T.

Watlow’s ASPYRE power controller family is flexible and scalable, and available with a variety of options allowing one platform to be re-used across a wide range of applications, which can help save time and money. ASPYRE models available include sizes from 35 to 700 amps.

Metso's Neles ND9000 is a top class intelligent valve controller designed to operate on all control valve actuators and in all industry areas.

STOBER is a recognized leader in reliable engineering and uncompromised support. Their gearboxes for motion control and power transmission applications are built on German engineering and are specified, assembled, painted and shipped right here in Kentucky. But the true mark of reliability is proven over time.

The new Des-Case mobile utility cart is the new solution to help reduce the trips between the lube room and plant equipment for your reliability team.

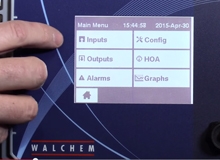

Connect with the Walchem W900 Series controllers and enjoy unparalleled versatility in maintaining your water treatment options.

Introducing the CT4 Cartesian Robot, a high-speed Cartesian pick and place unit integrated with a vision system so that it can pick up parts out of a random work area and place them down into an array.

WIKA's parent company, WIKA Alexander Wiegand SE & Co. KG, created this video for this year's Hannover Messe, the world's foremost technology event.

If you have a gauge that routinely fails on a piping system or even a pump and it's failing from vibration, that can tell you that the pump is misaligned or that the coupling on the pump is going out and needs service.

This video demonstrates how to replace a standard mechanical switch with the solid-state One Series from United Electric Controls.

We work with you to create solutions that will improve your process: from oil and gas measurement systems in the energy industry, to increasing productivity and throughput in solar panel production and semiconductor chip manufacturing, to designing safety systems for the automotive industry and building custom systems for lean manufacturing production flow.

This is the current inter-conveyor transfer process for a relay manufacturing line.

This process is where soy milk and brine are mixed in the tofu manufacturing line.

An overview of the industry applications for Rotork Instrument's products.

The desiccant breather replaces the standard dust cap or OEM breather cap on equipment, offering better filtration to protect against even the smallest particulates that destroy the effectiveness of your machinery, and cause downtime and costly repairs.

Iwaki's IX Series are digitally controlled direct-drive diaphragm pumps. Years of experience in high-end motor technology result in extremely accurate and energy efficient metering pumps with high resolution.

Walchem and Iwaki joined forces to develop the most innovative and comprehensive metering pump product line in the world. Iwaki metering pumps are designed for a broad range of applications, and are manufactured to the highest quality standards that exceed even the toughest customer expectations.

This video shows how to integrate an EKI-5500 switch into a PROFINET environment.

Advantech entry-level managed switch products support media redundancy protocols including EtherNet/IP real time standard.

Learn how to program a Walchem pump to run off a flowmeter in external mode.

Valin Corporation, a leading technical solutions provider in San Jose, Calif., announces it has launched its new e-commerce website.

Baby boomers are retiring at a rate of 10,000 people per day. Learn how Valin can help bridge your resource gap and keep your operations running smoothly and safely.

In this video, Ray Herrera demonstrates the proper and safe way of replacing an ASCO Solenoid Valve Coil.

In this video, Ray Herrera demonstrates how to troubleshoot an ASCO Solenoid Valve.

In this video, Ray Herrera demonstrates how to repair an ASCO Solenoid Valve.

In this video, Ray Herrera demonstrates how to properly identify an ASCO Solenoid Valve by showing how and where to find key information on the valve itself.

This video demonstrates how to install Heat Trace Specialist's SnoFree Panel Eave System.

This video demonstrates how to install Heat Trace Specialist's Serpentine (Zig Zag) Heat Cable System.

In this video, Chris Sullivan talks about the delicate blend between Automation and the Human Factor to ensure safety measures. Each factor is just as important as the other.

Securing the go ahead for a system upgrade can be difficult in a competitive work environment, where every cost must be accounted for and validated. Getting your project noticed and approved when it’s lost among a sea of competing projects—each one making a claim on finite resources—can seem an impossible task.

As part of Valin’s mission to provide unparalleled customer service and offer complete turn-key solutions to its customers, Valin offers its Aviation Fueling Nozzle Rebuild Program for its customers in the Aviation industry. Turnaround times for airports to have their fueling nozzles rebuilt or replaced are generally very long.

Ice dams can be frustrating. An ice dam forms when the roof over the attic gets warm enough to melt the bottom layer of snow. The snow melts into water and the water trickles down between the layers of snow until it reaches the eave of the roof. There, the water freezes, gradually growing into a mound of ice. These ice dams can damage both your roof and the inside of your home if the water finds an opening.

For 40 years Valin has been a resource to our customers providing the knowledge and expertise to help make your process more efficient and more profitable. We have the skills and knowledge to assess your company and manufacturing processes, before coming up with expert solutions to your downtime issues.

In this video, Robin Slater, Vice President of Sales, explains how Valin’s 40 years of experience can benefit your company through their completely customizable training programs.

In this how-to video, Scott Severse demonstrates how to properly program a Walchem W600 Cooling Tower Controller.

Brian Sullivan, Director of Sales, Technology at Valin Corporation, discusses the importance of repeatability, reliability, consistency, and accuracy.

Patrick Bartell, Vice President of Sales, Oil and Gas at Valin Corporation, discusses the benefits of updating legacy systems.

In this how-to video series from Valin, Nick Lyle demonstrates how to properly install a diaphragm on an LMI pump.

In this how-to video series from Valin, Nick Lyle demonstrates how to properly cut and install your LMI Tubing.

In this how-to video series from Valin, Nick Lyle demonstrates how to troubleshoot potential leaks on an LMI pump.

In this video Rich Wilbur demonstrates how you can use tubing that you can measure, mark, lay out, and bend. Check it out!

This video takes you step by step from deburring a piece of tubing, marking the tubing, making up the fitting, and pressurizing the fitting to show that it only takes one and a quarter turns to properly seal a fitting from leaks.

Among the 2015 Distinguished Business Award recipients honored at the Feb. 25 San Jose Silicon Valley Chamber of Commerce's Annual Membership Dinner is Joseph Nettemeyer, Valin Corporation's second CEO.

UnleashWD holds an annual conference that inspires distributors to be innovative.

Take a look into our manufacturing sector and see how we are working towards innovation & growth.

Archbishop Mitty High School Robotics Team visits Valin to thank them for their sponsorship.

Valin® Featured on NBC Bay Area News with San Jose Mayor Chuck Reed.

Valin's Ribbon Cutting Ceremony with special guest San Jose Mayor Chuck Reed.

Inventek Engineering presents The Fully Automatic Flexible Bottling System.

A lesson for me is that I need to involve you earlier in the program.

You were tireless in your support and it will not be forgotten!